Welcome to the AI Score website

"One reproducible test, one global score: compare chatbots fairly and instantly with the AI Score."

Context?

AI is transforming education and society — but not all educational chatbots are created equal. With dozens of platforms emerging and constant model updates, one crucial question remains:

Which chatbot can I trust to guide my students?

The AI Score was created to answer exactly that.

What is the AI Score?

The AI Score is a scientifically grounded, reproducible evaluation method designed to measure the pedagogical reliability of conversational agents used as educational chatbots.

It condenses complex AI behaviors into one clear, objective grade based on four key dimensions:

- Initial Performance (IP): How often the chatbot gives the right answer on the first try.

- Robustness (R): Whether it maintains its answer when questioned.

- Self-Correction Ability (SCA): Its capacity to fix its mistakes when challenged.

- Lack of Reliability (LR): How often it contradicts itself, loses context, or bends to user pressure.
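To make the four dimensions concrete, here is a minimal sketch of how they could be tallied from a set of test questions. It is an illustration under stated assumptions, not the published AI Score method. The `Trial` record, the `tally_dimensions` function, and the mapping of each dimension to a simple "before and after a challenge" outcome are all hypothetical. In particular, LR is reduced here to "a correct answer abandoned under challenge", which is narrower than the definition above.

```python
from dataclasses import dataclass


@dataclass
class Trial:
    """One test question (hypothetical record, not the official protocol)."""
    first_correct: bool  # the chatbot was right on the first try
    final_correct: bool  # the chatbot was right after being challenged


def tally_dimensions(trials):
    """Return each dimension as a fraction of all questions asked.

    Assumed mapping (illustrative only):
      IP  = right on the first try
      R   = right at first and still right after the challenge
      SCA = wrong at first, then corrected after the challenge
      LR  = right at first, then abandoned after the challenge
    """
    n = len(trials)
    if n == 0:
        raise ValueError("at least one trial is required")
    return {
        "IP": sum(t.first_correct for t in trials) / n,
        "R": sum(t.first_correct and t.final_correct for t in trials) / n,
        "SCA": sum((not t.first_correct) and t.final_correct for t in trials) / n,
        "LR": sum(t.first_correct and (not t.final_correct) for t in trials) / n,
    }


if __name__ == "__main__":
    sample = [
        Trial(first_correct=True, final_correct=True),
        Trial(first_correct=True, final_correct=False),
        Trial(first_correct=False, final_correct=True),
        Trial(first_correct=False, final_correct=False),
    ]
    print(tally_dimensions(sample))
```

How these four values are weighted and combined into one grade is not specified on this page, so the sketch stops at the individual dimensions.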

Why did we create it?

Educational chatbots are everywhere: ChatGPT, Copilot, Mistral, Grok, NotebookLM, Claude, and countless custom classroom bots. But despite their promises, they also bring:

- Hallucinations and incorrect explanations

- Inconsistent answers

- Context loss

- Overly confident errors

- Huge differences in quality across platforms

Teachers deserve transparent, evidence-based guidance — not guesswork.

A research-backed answer

The AI Score was born from this need for clarity. Developed by university researchers, the framework provides:

- An objective way to compare chatbots

- A reproducible test

- A common language for discussing AI reliability in education

What the AI Score brings to educators

Trustworthiness

You know what the chatbot is likely to get right — and wrong.

Comparability

Platforms can finally be evaluated on equal footing.

Safety

You reduce the risk of deploying unstable or misleading AI to students.

Version tracking

You can check for regressions after model updates or prompt changes.

Who are we?

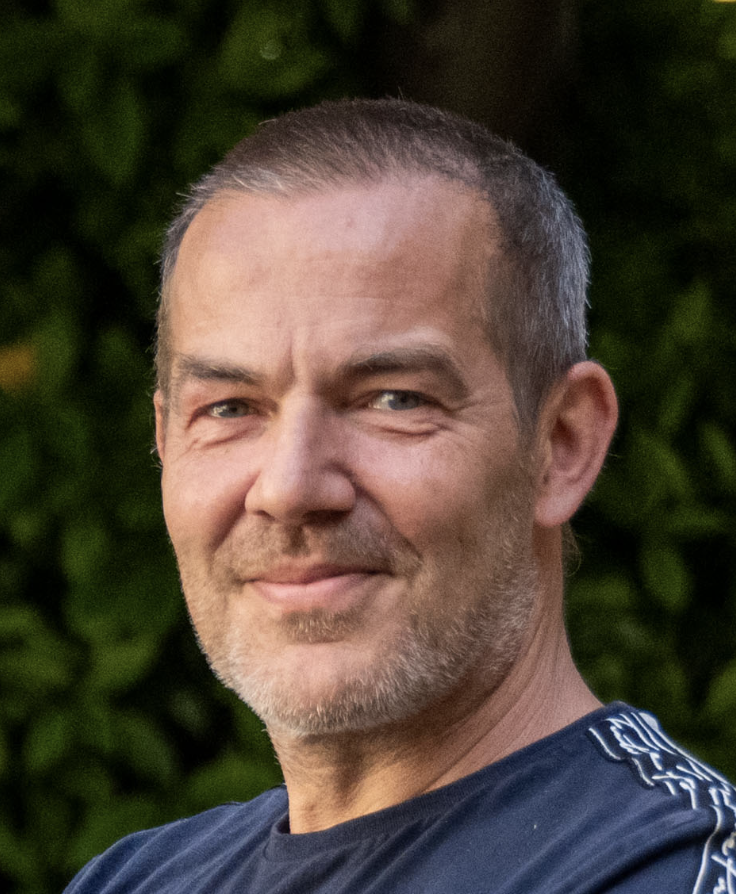

Prof. Michaël Lobet

Prof. Michaël Lobet is a research associate of the Fonds de la Recherche Scientifique de Belgique (F.R.S.-FNRS) based in the physics department of the University of Namur, as well as an associate at Harvard University. He holds a PhD in quantum mechanics and photonics and a master's degree in the pedagogy of higher education.

Dr. Miguël Dhyne

Dr. Miguël Dhyne is a Belgian physics educator and researcher, and an expert in pedagogical innovation, EdTech, and educational AI. He develops practical solutions and trains teachers to use digital tools and AI effectively. He champions a vision of education that is inclusive, accessible, and grounded in real-world needs. He is a scientific collaborator at the University of Namur.

Laurence Dumortier

Laurence Dumortier holds a PhD in Mathematical Sciences from the University of Namur. Since 2000, she has worked as an IT specialist at the TICE unit (UNamur/FaSEF), where she co-administers the institutional LMS and helps teachers master the use of technology in education.

Jean-Roch Meurisse

After 4 years as a teaching assistant at the Faculty of Computer Science at the University of Namur, Jean-Roch Meurisse joined the TICE Unit (UNamur/FaSEF) where he focuses on the co-administration and evolution of the institutional LMS. He also assists university teachers in choosing, implementing or developing digital tools for teaching.